mirror of

https://github.com/opencloud-eu/opencloud.git

synced 2026-05-03 09:20:50 -05:00

switch to go vendoring


+1

@@ -0,0 +1 @@

|

||||

*.test

|

||||

+30

@@ -0,0 +1,30 @@

linters-settings:
  golint:
    min-confidence: 0

  misspell:
    locale: US

linters:
  disable-all: true
  enable:
    - typecheck
    - goimports
    - misspell
    - govet
    - golint
    - ineffassign
    - gosimple
    - deadcode
    - unparam
    - unused
    - structcheck

issues:
  exclude-use-default: false
  exclude:
    - should have a package comment
    - error strings should not be capitalized or end with punctuation or a newline
    - should have comment # TODO(aead): Remove once all exported ident. have comments!
service:
  golangci-lint-version: 1.20.0 # use the fixed version to not introduce new linters unexpectedly

|

||||

+202

@@ -0,0 +1,202 @@

|

||||

|

||||

Apache License

|

||||

Version 2.0, January 2004

|

||||

http://www.apache.org/licenses/

|

||||

|

||||

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

||||

|

||||

1. Definitions.

|

||||

|

||||

"License" shall mean the terms and conditions for use, reproduction,

|

||||

and distribution as defined by Sections 1 through 9 of this document.

|

||||

|

||||

"Licensor" shall mean the copyright owner or entity authorized by

|

||||

the copyright owner that is granting the License.

|

||||

|

||||

"Legal Entity" shall mean the union of the acting entity and all

|

||||

other entities that control, are controlled by, or are under common

|

||||

control with that entity. For the purposes of this definition,

|

||||

"control" means (i) the power, direct or indirect, to cause the

|

||||

direction or management of such entity, whether by contract or

|

||||

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

||||

outstanding shares, or (iii) beneficial ownership of such entity.

|

||||

|

||||

"You" (or "Your") shall mean an individual or Legal Entity

|

||||

exercising permissions granted by this License.

|

||||

|

||||

"Source" form shall mean the preferred form for making modifications,

|

||||

including but not limited to software source code, documentation

|

||||

source, and configuration files.

|

||||

|

||||

"Object" form shall mean any form resulting from mechanical

|

||||

transformation or translation of a Source form, including but

|

||||

not limited to compiled object code, generated documentation,

|

||||

and conversions to other media types.

|

||||

|

||||

"Work" shall mean the work of authorship, whether in Source or

|

||||

Object form, made available under the License, as indicated by a

|

||||

copyright notice that is included in or attached to the work

|

||||

(an example is provided in the Appendix below).

|

||||

|

||||

"Derivative Works" shall mean any work, whether in Source or Object

|

||||

form, that is based on (or derived from) the Work and for which the

|

||||

editorial revisions, annotations, elaborations, or other modifications

|

||||

represent, as a whole, an original work of authorship. For the purposes

|

||||

of this License, Derivative Works shall not include works that remain

|

||||

separable from, or merely link (or bind by name) to the interfaces of,

|

||||

the Work and Derivative Works thereof.

|

||||

|

||||

"Contribution" shall mean any work of authorship, including

|

||||

the original version of the Work and any modifications or additions

|

||||

to that Work or Derivative Works thereof, that is intentionally

|

||||

submitted to Licensor for inclusion in the Work by the copyright owner

|

||||

or by an individual or Legal Entity authorized to submit on behalf of

|

||||

the copyright owner. For the purposes of this definition, "submitted"

|

||||

means any form of electronic, verbal, or written communication sent

|

||||

to the Licensor or its representatives, including but not limited to

|

||||

communication on electronic mailing lists, source code control systems,

|

||||

and issue tracking systems that are managed by, or on behalf of, the

|

||||

Licensor for the purpose of discussing and improving the Work, but

|

||||

excluding communication that is conspicuously marked or otherwise

|

||||

designated in writing by the copyright owner as "Not a Contribution."

|

||||

|

||||

"Contributor" shall mean Licensor and any individual or Legal Entity

|

||||

on behalf of whom a Contribution has been received by Licensor and

|

||||

subsequently incorporated within the Work.

|

||||

|

||||

2. Grant of Copyright License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

copyright license to reproduce, prepare Derivative Works of,

|

||||

publicly display, publicly perform, sublicense, and distribute the

|

||||

Work and such Derivative Works in Source or Object form.

|

||||

|

||||

3. Grant of Patent License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

(except as stated in this section) patent license to make, have made,

|

||||

use, offer to sell, sell, import, and otherwise transfer the Work,

|

||||

where such license applies only to those patent claims licensable

|

||||

by such Contributor that are necessarily infringed by their

|

||||

Contribution(s) alone or by combination of their Contribution(s)

|

||||

with the Work to which such Contribution(s) was submitted. If You

|

||||

institute patent litigation against any entity (including a

|

||||

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

||||

or a Contribution incorporated within the Work constitutes direct

|

||||

or contributory patent infringement, then any patent licenses

|

||||

granted to You under this License for that Work shall terminate

|

||||

as of the date such litigation is filed.

|

||||

|

||||

4. Redistribution. You may reproduce and distribute copies of the

|

||||

Work or Derivative Works thereof in any medium, with or without

|

||||

modifications, and in Source or Object form, provided that You

|

||||

meet the following conditions:

|

||||

|

||||

(a) You must give any other recipients of the Work or

|

||||

Derivative Works a copy of this License; and

|

||||

|

||||

(b) You must cause any modified files to carry prominent notices

|

||||

stating that You changed the files; and

|

||||

|

||||

(c) You must retain, in the Source form of any Derivative Works

|

||||

that You distribute, all copyright, patent, trademark, and

|

||||

attribution notices from the Source form of the Work,

|

||||

excluding those notices that do not pertain to any part of

|

||||

the Derivative Works; and

|

||||

|

||||

(d) If the Work includes a "NOTICE" text file as part of its

|

||||

distribution, then any Derivative Works that You distribute must

|

||||

include a readable copy of the attribution notices contained

|

||||

within such NOTICE file, excluding those notices that do not

|

||||

pertain to any part of the Derivative Works, in at least one

|

||||

of the following places: within a NOTICE text file distributed

|

||||

as part of the Derivative Works; within the Source form or

|

||||

documentation, if provided along with the Derivative Works; or,

|

||||

within a display generated by the Derivative Works, if and

|

||||

wherever such third-party notices normally appear. The contents

|

||||

of the NOTICE file are for informational purposes only and

|

||||

do not modify the License. You may add Your own attribution

|

||||

notices within Derivative Works that You distribute, alongside

|

||||

or as an addendum to the NOTICE text from the Work, provided

|

||||

that such additional attribution notices cannot be construed

|

||||

as modifying the License.

|

||||

|

||||

You may add Your own copyright statement to Your modifications and

|

||||

may provide additional or different license terms and conditions

|

||||

for use, reproduction, or distribution of Your modifications, or

|

||||

for any such Derivative Works as a whole, provided Your use,

|

||||

reproduction, and distribution of the Work otherwise complies with

|

||||

the conditions stated in this License.

|

||||

|

||||

5. Submission of Contributions. Unless You explicitly state otherwise,

|

||||

any Contribution intentionally submitted for inclusion in the Work

|

||||

by You to the Licensor shall be under the terms and conditions of

|

||||

this License, without any additional terms or conditions.

|

||||

Notwithstanding the above, nothing herein shall supersede or modify

|

||||

the terms of any separate license agreement you may have executed

|

||||

with Licensor regarding such Contributions.

|

||||

|

||||

6. Trademarks. This License does not grant permission to use the trade

|

||||

names, trademarks, service marks, or product names of the Licensor,

|

||||

except as required for reasonable and customary use in describing the

|

||||

origin of the Work and reproducing the content of the NOTICE file.

|

||||

|

||||

7. Disclaimer of Warranty. Unless required by applicable law or

|

||||

agreed to in writing, Licensor provides the Work (and each

|

||||

Contributor provides its Contributions) on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

||||

implied, including, without limitation, any warranties or conditions

|

||||

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

||||

PARTICULAR PURPOSE. You are solely responsible for determining the

|

||||

appropriateness of using or redistributing the Work and assume any

|

||||

risks associated with Your exercise of permissions under this License.

|

||||

|

||||

8. Limitation of Liability. In no event and under no legal theory,

|

||||

whether in tort (including negligence), contract, or otherwise,

|

||||

unless required by applicable law (such as deliberate and grossly

|

||||

negligent acts) or agreed to in writing, shall any Contributor be

|

||||

liable to You for damages, including any direct, indirect, special,

|

||||

incidental, or consequential damages of any character arising as a

|

||||

result of this License or out of the use or inability to use the

|

||||

Work (including but not limited to damages for loss of goodwill,

|

||||

work stoppage, computer failure or malfunction, or any and all

|

||||

other commercial damages or losses), even if such Contributor

|

||||

has been advised of the possibility of such damages.

|

||||

|

||||

9. Accepting Warranty or Additional Liability. While redistributing

|

||||

the Work or Derivative Works thereof, You may choose to offer,

|

||||

and charge a fee for, acceptance of support, warranty, indemnity,

|

||||

or other liability obligations and/or rights consistent with this

|

||||

License. However, in accepting such obligations, You may act only

|

||||

on Your own behalf and on Your sole responsibility, not on behalf

|

||||

of any other Contributor, and only if You agree to indemnify,

|

||||

defend, and hold each Contributor harmless for any liability

|

||||

incurred by, or claims asserted against, such Contributor by reason

|

||||

of your accepting any such warranty or additional liability.

|

||||

|

||||

END OF TERMS AND CONDITIONS

|

||||

|

||||

APPENDIX: How to apply the Apache License to your work.

|

||||

|

||||

To apply the Apache License to your work, attach the following

|

||||

boilerplate notice, with the fields enclosed by brackets "[]"

|

||||

replaced with your own identifying information. (Don't include

|

||||

the brackets!) The text should be enclosed in the appropriate

|

||||

comment syntax for the file format. We also recommend that a

|

||||

file or class name and description of purpose be included on the

|

||||

same "printed page" as the copyright notice for easier

|

||||

identification within third-party archives.

|

||||

|

||||

Copyright [yyyy] [name of copyright owner]

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software

|

||||

distributed under the License is distributed on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

See the License for the specific language governing permissions and

|

||||

limitations under the License.

|

||||

+99

@@ -0,0 +1,99 @@

[GoDoc](https://godoc.org/github.com/minio/highwayhash)
[Build Status](https://travis-ci.org/minio/highwayhash)

## HighwayHash

[HighwayHash](https://github.com/google/highwayhash) is a pseudo-random function (PRF) developed by Jyrki Alakuijala, Bill Cox and Jan Wassenberg (Google Research). HighwayHash takes a 256-bit key and computes 64-, 128- or 256-bit hash values of given messages.

It can be used to prevent hash-flooding attacks or to authenticate short-lived messages. Additionally, it can be used as a fingerprinting function. HighwayHash is not a general-purpose cryptographic hash function (such as Blake2b, SHA-3 or SHA-2) and should not be used if strong collision resistance is required.

This repository contains a native Go version and optimized assembly implementations for Intel, ARM and ppc64le architectures.

### High performance

HighwayHash is a SIMD hash function that is approximately 5x faster than [SipHash](https://www.131002.net/siphash/siphash.pdf), which is itself a fast and 'cryptographically strong' pseudo-random function designed by Aumasson and Bernstein.

HighwayHash uses a new way of mixing inputs with AVX2 multiply and permute instructions. The multiplications are 32x32-bit, giving 64-bit results, and are therefore infeasible to reverse. Additionally, permuting equalizes the distribution of the resulting bytes. The algorithm outputs digests ranging from 64 bits up to 256 bits at no extra cost.
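
The 32x32-bit multiply mentioned above can be sketched in plain Go for a single 64-bit lane. This is only an illustration of the widening multiply, not the package's implementation: `mulMix` is a hypothetical name, and the choice of which operand contributes its low half and which its high half is an assumption made for the example. The permute step is left out.

```go
package main

import "fmt"

// mulMix is a hypothetical helper for illustration only.
// It multiplies the low 32 bits of a by the high 32 bits of b.
// Both factors fit in 32 bits, so the 64-bit product never overflows.
func mulMix(a, b uint64) uint64 {
	return uint64(uint32(a)) * (b >> 32)
}

func main() {
	a := uint64(0x00000000FFFFFFFF) // low half is all ones
	b := uint64(0xFFFFFFFF00000000) // high half is all ones
	fmt.Printf("%#x\n", mulMix(a, b)) // prints 0xfffffffe00000001
}
```

Every bit of both 32-bit inputs can influence the 64-bit product, which is the property the paragraph above relies on when it describes the multiplication as hard to reverse.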

### Stable

All three output sizes of HighwayHash have been declared [stable](https://github.com/google/highwayhash/#versioning-and-stability) as of January 2018. This means that the hash results for any given input message are guaranteed not to change.

### Installation

Install: `go get -u github.com/minio/highwayhash`

### Intel Performance

Below are the single-core results on an Intel Core i7 (3.1 GHz) for 256-bit outputs:

```
BenchmarkSum256_16        204.17 MB/s
BenchmarkSum256_64       1040.63 MB/s
BenchmarkSum256_1K       8653.30 MB/s
BenchmarkSum256_8K      13476.07 MB/s
BenchmarkSum256_1M      14928.71 MB/s
BenchmarkSum256_5M      14180.04 MB/s
BenchmarkSum256_10M     12458.65 MB/s
BenchmarkSum256_25M     11927.25 MB/s
```

For moderately sized messages, throughput tops out at about 15 GB/s. Even for small messages (1K), performance already reaches approximately 60% of the maximum throughput.
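
The 60% figure can be checked against the Intel benchmark numbers listed above. The following is a minimal sketch of that arithmetic; `fractionOfPeak` is a hypothetical helper name, and taking the 1M result as the peak is an assumption based on it being the highest value in the table.

```go
package main

import "fmt"

// fractionOfPeak returns throughput as a fraction of the peak value.
// It is a hypothetical helper used only for this illustration.
func fractionOfPeak(throughput, peak float64) float64 {
	return throughput / peak
}

func main() {
	const (
		sum256At1K = 8653.30  // MB/s, BenchmarkSum256_1K
		sum256At1M = 14928.71 // MB/s, BenchmarkSum256_1M (highest listed value)
	)
	// prints "1K reaches 58% of peak"
	fmt.Printf("1K reaches %.0f%% of peak\n", 100*fractionOfPeak(sum256At1K, sum256At1M))
}
```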

### ARM Performance

Below are the single-core results on an EC2 m6g.4xlarge (Graviton2) instance for 256-bit outputs:

```
BenchmarkSum256_16         96.82 MB/s
BenchmarkSum256_64        445.35 MB/s
BenchmarkSum256_1K       2782.46 MB/s
BenchmarkSum256_8K       4083.58 MB/s
BenchmarkSum256_1M       4986.41 MB/s
BenchmarkSum256_5M       4992.72 MB/s
BenchmarkSum256_10M      4993.32 MB/s
BenchmarkSum256_25M      4992.55 MB/s
```

### ppc64le Performance

The ppc64le accelerated version is roughly 10x faster compared to the non-optimized version:

```
benchmark               old MB/s     new MB/s     speedup
BenchmarkWrite_8K       531.19       5566.41      10.48x
BenchmarkSum64_8K       518.86       4971.88      9.58x
BenchmarkSum256_8K      502.45       4474.20      8.90x
```

### Performance compared to other hashing techniques

On a Skylake CPU (3.0 GHz Xeon Platinum 8124M) the table below shows how HighwayHash compares to other hashing techniques for 5 MB messages (single-core performance, all Golang implementations, see [benchmark](https://github.com/fwessels/HashCompare/blob/master/benchmarks_test.go)).

```
BenchmarkHighwayHash      11986.98 MB/s
BenchmarkSHA256_AVX512     3552.74 MB/s
BenchmarkBlake2b            972.38 MB/s
BenchmarkSHA1               950.64 MB/s
BenchmarkMD5                684.18 MB/s
BenchmarkSHA512             562.04 MB/s
BenchmarkSHA256             383.07 MB/s
```

*Note: the AVX512 version of SHA256 uses the [multi-buffer crypto library](https://github.com/intel/intel-ipsec-mb) technique as developed by Intel; more details can be found in [sha256-simd](https://github.com/minio/sha256-simd/).*

|

||||

|

||||

### Qualitative assessment

|

||||

|

||||

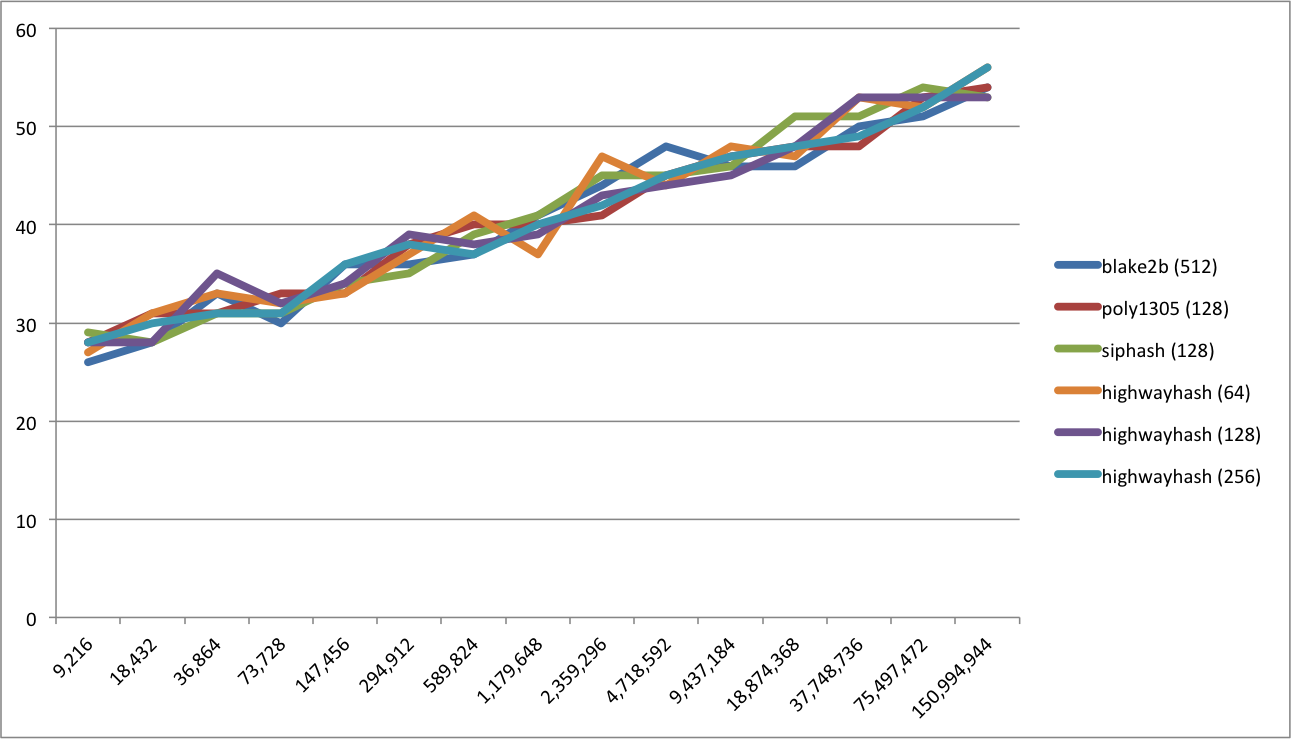

We have performed a 'qualitative' assessment of how HighwayHash compares to Blake2b in terms of the distribution of the checksums for varying numbers of messages. It shows that HighwayHash behaves similarly according to the following graph:

|

||||

|

||||

|

||||

|

||||

More information can be found in [HashCompare](https://github.com/fwessels/HashCompare).

### Requirements

All Go versions >= 1.11 are supported (Go 1.11 is the minimum version that provides the assembly support required for the different platforms).

### Contributing

Contributions are welcome; please send PRs for any enhancements.

|

||||

+225

@@ -0,0 +1,225 @@

// Copyright (c) 2017 Minio Inc. All rights reserved.
// Use of this source code is governed by a license that can be
// found in the LICENSE file.

// Package highwayhash implements the pseudo-random-function (PRF) HighwayHash.
// HighwayHash is a fast hash function designed to defend hash-flooding attacks
// or to authenticate short-lived messages.
//
// HighwayHash is not a general purpose cryptographic hash function and does not
// provide (strong) collision resistance.
package highwayhash

import (
	"encoding/binary"
	"errors"
	"hash"
)

const (
	// Size is the size of HighwayHash-256 checksum in bytes.
	Size = 32
	// Size128 is the size of HighwayHash-128 checksum in bytes.
	Size128 = 16
	// Size64 is the size of HighwayHash-64 checksum in bytes.
	Size64 = 8
)

var errKeySize = errors.New("highwayhash: invalid key size")

// New returns a hash.Hash computing the HighwayHash-256 checksum.
// It returns a non-nil error if the key is not 32 bytes long.
func New(key []byte) (hash.Hash, error) {
	if len(key) != Size {
		return nil, errKeySize
	}
	h := &digest{size: Size}
	copy(h.key[:], key)
	h.Reset()
	return h, nil
}

// New128 returns a hash.Hash computing the HighwayHash-128 checksum.
// It returns a non-nil error if the key is not 32 bytes long.
func New128(key []byte) (hash.Hash, error) {
	if len(key) != Size {
		return nil, errKeySize
	}
	h := &digest{size: Size128}
	copy(h.key[:], key)
	h.Reset()
	return h, nil
}

// New64 returns a hash.Hash computing the HighwayHash-64 checksum.
// It returns a non-nil error if the key is not 32 bytes long.
func New64(key []byte) (hash.Hash64, error) {
	if len(key) != Size {
		return nil, errKeySize
	}
	h := new(digest64)
	h.size = Size64
	copy(h.key[:], key)
	h.Reset()
	return h, nil
}

// Sum computes the HighwayHash-256 checksum of data.
// It panics if the key is not 32 bytes long.
func Sum(data, key []byte) [Size]byte {
	if len(key) != Size {
		panic(errKeySize)
	}
	var state [16]uint64
	initialize(&state, key)
	if n := len(data) & (^(Size - 1)); n > 0 {
		update(&state, data[:n])
		data = data[n:]
	}
	if len(data) > 0 {
		var block [Size]byte
		offset := copy(block[:], data)
		hashBuffer(&state, &block, offset)
	}
	var hash [Size]byte
	finalize(hash[:], &state)
	return hash
}

// Sum128 computes the HighwayHash-128 checksum of data.
// It panics if the key is not 32 bytes long.
func Sum128(data, key []byte) [Size128]byte {
	if len(key) != Size {
		panic(errKeySize)
	}
	var state [16]uint64
	initialize(&state, key)
	if n := len(data) & (^(Size - 1)); n > 0 {
		update(&state, data[:n])
		data = data[n:]
	}
	if len(data) > 0 {
		var block [Size]byte
		offset := copy(block[:], data)
		hashBuffer(&state, &block, offset)
	}
	var hash [Size128]byte
	finalize(hash[:], &state)
	return hash
}

// Sum64 computes the HighwayHash-64 checksum of data.
// It panics if the key is not 32 bytes long.
func Sum64(data, key []byte) uint64 {
	if len(key) != Size {
		panic(errKeySize)
	}
	var state [16]uint64
	initialize(&state, key)
	if n := len(data) & (^(Size - 1)); n > 0 {
		update(&state, data[:n])
		data = data[n:]
	}
	if len(data) > 0 {
		var block [Size]byte
		offset := copy(block[:], data)
		hashBuffer(&state, &block, offset)
	}
	var hash [Size64]byte
	finalize(hash[:], &state)
	return binary.LittleEndian.Uint64(hash[:])
}

type digest64 struct{ digest }

func (d *digest64) Sum64() uint64 {
	state := d.state
	if d.offset > 0 {
		hashBuffer(&state, &d.buffer, d.offset)
	}
	var hash [8]byte
	finalize(hash[:], &state)
	return binary.LittleEndian.Uint64(hash[:])
}

type digest struct {
	state [16]uint64 // v0 | v1 | mul0 | mul1

	key, buffer [Size]byte
	offset      int

	size int
}

func (d *digest) Size() int { return d.size }

func (d *digest) BlockSize() int { return Size }

func (d *digest) Reset() {
	initialize(&d.state, d.key[:])
	d.offset = 0
}

func (d *digest) Write(p []byte) (n int, err error) {
	n = len(p)
	if d.offset > 0 {
		remaining := Size - d.offset
		if n < remaining {
			d.offset += copy(d.buffer[d.offset:], p)
			return
		}
		copy(d.buffer[d.offset:], p[:remaining])
		update(&d.state, d.buffer[:])
		p = p[remaining:]
		d.offset = 0
	}
	if nn := len(p) & (^(Size - 1)); nn > 0 {
		update(&d.state, p[:nn])
		p = p[nn:]
	}
	if len(p) > 0 {
		d.offset = copy(d.buffer[d.offset:], p)
	}
	return
}

func (d *digest) Sum(b []byte) []byte {
	state := d.state
	if d.offset > 0 {
		hashBuffer(&state, &d.buffer, d.offset)
	}
	var hash [Size]byte
	finalize(hash[:d.size], &state)
	return append(b, hash[:d.size]...)
}

func hashBuffer(state *[16]uint64, buffer *[32]byte, offset int) {
	var block [Size]byte
	mod32 := (uint64(offset) << 32) + uint64(offset)
	for i := range state[:4] {
		state[i] += mod32
	}
	for i := range state[4:8] {
		t0 := uint32(state[i+4])
		t0 = (t0 << uint(offset)) | (t0 >> uint(32-offset))

		t1 := uint32(state[i+4] >> 32)
		t1 = (t1 << uint(offset)) | (t1 >> uint(32-offset))

		state[i+4] = (uint64(t1) << 32) | uint64(t0)
	}

	mod4 := offset & 3
	remain := offset - mod4

	copy(block[:], buffer[:remain])
	if offset >= 16 {
		copy(block[28:], buffer[offset-4:])
	} else if mod4 != 0 {
		last := uint32(buffer[remain])
		last += uint32(buffer[remain+mod4>>1]) << 8
		last += uint32(buffer[offset-1]) << 16
		binary.LittleEndian.PutUint32(block[16:], last)
	}
	update(state, block[:])
}

|

||||

+248

@@ -0,0 +1,248 @@

|

||||

// Copyright (c) 2017 Minio Inc. All rights reserved.

|

||||

// Use of this source code is governed by a license that can be

|

||||

// found in the LICENSE file.

|

||||

|

||||

// +build amd64,!gccgo,!appengine,!nacl,!noasm

|

||||

|

||||

#include "textflag.h"

|

||||

|

||||

DATA ·consAVX2<>+0x00(SB)/8, $0xdbe6d5d5fe4cce2f

|

||||

DATA ·consAVX2<>+0x08(SB)/8, $0xa4093822299f31d0

|

||||

DATA ·consAVX2<>+0x10(SB)/8, $0x13198a2e03707344

|

||||

DATA ·consAVX2<>+0x18(SB)/8, $0x243f6a8885a308d3

|

||||

DATA ·consAVX2<>+0x20(SB)/8, $0x3bd39e10cb0ef593

|

||||

DATA ·consAVX2<>+0x28(SB)/8, $0xc0acf169b5f18a8c

|

||||

DATA ·consAVX2<>+0x30(SB)/8, $0xbe5466cf34e90c6c

|

||||

DATA ·consAVX2<>+0x38(SB)/8, $0x452821e638d01377

|

||||

GLOBL ·consAVX2<>(SB), (NOPTR+RODATA), $64

|

||||

|

||||

DATA ·zipperMergeAVX2<>+0x00(SB)/8, $0xf010e05020c03

|

||||

DATA ·zipperMergeAVX2<>+0x08(SB)/8, $0x70806090d0a040b

|

||||

DATA ·zipperMergeAVX2<>+0x10(SB)/8, $0xf010e05020c03

|

||||

DATA ·zipperMergeAVX2<>+0x18(SB)/8, $0x70806090d0a040b

|

||||

GLOBL ·zipperMergeAVX2<>(SB), (NOPTR+RODATA), $32

|

||||

|

||||

#define REDUCE_MOD(x0, x1, x2, x3, tmp0, tmp1, y0, y1) \

|

||||

MOVQ $0x3FFFFFFFFFFFFFFF, tmp0 \

|

||||

ANDQ tmp0, x3 \

|

||||

MOVQ x2, y0 \

|

||||

MOVQ x3, y1 \

|

||||

\

|

||||

MOVQ x2, tmp0 \

|

||||

MOVQ x3, tmp1 \

|

||||

SHLQ $1, tmp1 \

|

||||

SHRQ $63, tmp0 \

|

||||

MOVQ tmp1, x3 \

|

||||

ORQ tmp0, x3 \

|

||||

\

|

||||

SHLQ $1, x2 \

|

||||

\

|

||||

MOVQ y0, tmp0 \

|

||||

MOVQ y1, tmp1 \

|

||||

SHLQ $2, tmp1 \

|

||||

SHRQ $62, tmp0 \

|

||||

MOVQ tmp1, y1 \

|

||||

ORQ tmp0, y1 \

|

||||

\

|

||||

SHLQ $2, y0 \

|

||||

\

|

||||

XORQ x0, y0 \

|

||||

XORQ x2, y0 \

|

||||

XORQ x1, y1 \

|

||||

XORQ x3, y1

|

||||

|

||||

#define UPDATE(msg) \

|

||||

VPADDQ msg, Y2, Y2 \

|

||||

VPADDQ Y3, Y2, Y2 \

|

||||

\

|

||||

VPSRLQ $32, Y1, Y0 \

|

||||

BYTE $0xC5; BYTE $0xFD; BYTE $0xF4; BYTE $0xC2 \ // VPMULUDQ Y2, Y0, Y0

|

||||

VPXOR Y0, Y3, Y3 \

|

||||

\

|

||||

VPADDQ Y4, Y1, Y1 \

|

||||

\

|

||||

VPSRLQ $32, Y2, Y0 \

|

||||

BYTE $0xC5; BYTE $0xFD; BYTE $0xF4; BYTE $0xC1 \ // VPMULUDQ Y1, Y0, Y0

|

||||

VPXOR Y0, Y4, Y4 \

|

||||

\

|

||||

VPSHUFB Y5, Y2, Y0 \

|

||||

VPADDQ Y0, Y1, Y1 \

|

||||

\

|

||||

VPSHUFB Y5, Y1, Y0 \

|

||||

VPADDQ Y0, Y2, Y2

|

||||

|

||||

// func initializeAVX2(state *[16]uint64, key []byte)

|

||||

TEXT ·initializeAVX2(SB), 4, $0-32

|

||||

MOVQ state+0(FP), AX

|

||||

MOVQ key_base+8(FP), BX

|

||||

MOVQ $·consAVX2<>(SB), CX

|

||||

|

||||

VMOVDQU 0(BX), Y1

|

||||

VPSHUFD $177, Y1, Y2

|

||||

|

||||

VMOVDQU 0(CX), Y3

|

||||

VMOVDQU 32(CX), Y4

|

||||

|

||||

VPXOR Y3, Y1, Y1

|

||||

VPXOR Y4, Y2, Y2

|

||||

|

||||

VMOVDQU Y1, 0(AX)

|

||||

VMOVDQU Y2, 32(AX)

|

||||

VMOVDQU Y3, 64(AX)

|

||||

VMOVDQU Y4, 96(AX)

|

||||

VZEROUPPER

|

||||

RET

|

||||

|

||||

// func updateAVX2(state *[16]uint64, msg []byte)

|

||||

TEXT ·updateAVX2(SB), 4, $0-32

|

||||

MOVQ state+0(FP), AX

|

||||

MOVQ msg_base+8(FP), BX

|

||||

MOVQ msg_len+16(FP), CX

|

||||

|

||||

CMPQ CX, $32

|

||||

JB DONE

|

||||

|

||||

VMOVDQU 0(AX), Y1

|

||||

VMOVDQU 32(AX), Y2

|

||||

VMOVDQU 64(AX), Y3

|

||||

VMOVDQU 96(AX), Y4

|

||||

|

||||

VMOVDQU ·zipperMergeAVX2<>(SB), Y5

|

||||

|

||||

LOOP:

|

||||

VMOVDQU 0(BX), Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

ADDQ $32, BX

|

||||

SUBQ $32, CX

|

||||

JA LOOP

|

||||

|

||||

VMOVDQU Y1, 0(AX)

|

||||

VMOVDQU Y2, 32(AX)

|

||||

VMOVDQU Y3, 64(AX)

|

||||

VMOVDQU Y4, 96(AX)

|

||||

VZEROUPPER

|

||||

|

||||

DONE:

|

||||

RET

|

||||

|

||||

// func finalizeAVX2(out []byte, state *[16]uint64)

|

||||

TEXT ·finalizeAVX2(SB), 4, $0-32

|

||||

MOVQ state+24(FP), AX

|

||||

MOVQ out_base+0(FP), BX

|

||||

MOVQ out_len+8(FP), CX

|

||||

|

||||

VMOVDQU 0(AX), Y1

|

||||

VMOVDQU 32(AX), Y2

|

||||

VMOVDQU 64(AX), Y3

|

||||

VMOVDQU 96(AX), Y4

|

||||

|

||||

VMOVDQU ·zipperMergeAVX2<>(SB), Y5

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

CMPQ CX, $8

|

||||

JE skipUpdate // Just 4 rounds for 64-bit checksum

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

CMPQ CX, $16

|

||||

JE skipUpdate // 6 rounds for 128-bit checksum

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

|

||||

|

||||

VPERM2I128 $1, Y1, Y1, Y0

|

||||

VPSHUFD $177, Y0, Y0

|

||||

UPDATE(Y0)

skipUpdate:
	VMOVDQU Y1, 0(AX)
	VMOVDQU Y2, 32(AX)
	VMOVDQU Y3, 64(AX)
	VMOVDQU Y4, 96(AX)
	VZEROUPPER

	CMPQ CX, $8
	JE hash64
	CMPQ CX, $16
	JE hash128

	// 256-bit checksum
	MOVQ 0*8(AX), R8
	MOVQ 1*8(AX), R9
	MOVQ 4*8(AX), R10
	MOVQ 5*8(AX), R11
	ADDQ 8*8(AX), R8
	ADDQ 9*8(AX), R9
	ADDQ 12*8(AX), R10
	ADDQ 13*8(AX), R11

	REDUCE_MOD(R8, R9, R10, R11, R12, R13, R14, R15)
	MOVQ R14, 0(BX)
	MOVQ R15, 8(BX)

	MOVQ 2*8(AX), R8
	MOVQ 3*8(AX), R9
	MOVQ 6*8(AX), R10
	MOVQ 7*8(AX), R11
	ADDQ 10*8(AX), R8
	ADDQ 11*8(AX), R9
	ADDQ 14*8(AX), R10
	ADDQ 15*8(AX), R11

	REDUCE_MOD(R8, R9, R10, R11, R12, R13, R14, R15)
	MOVQ R14, 16(BX)
	MOVQ R15, 24(BX)
	RET

hash128:
	MOVQ 0*8(AX), R8
	MOVQ 1*8(AX), R9
	ADDQ 6*8(AX), R8
	ADDQ 7*8(AX), R9
	ADDQ 8*8(AX), R8
	ADDQ 9*8(AX), R9
	ADDQ 14*8(AX), R8
	ADDQ 15*8(AX), R9
	MOVQ R8, 0(BX)
	MOVQ R9, 8(BX)
	RET

hash64:
	MOVQ 0*8(AX), DX
	ADDQ 4*8(AX), DX
	ADDQ 8*8(AX), DX
	ADDQ 12*8(AX), DX
	MOVQ DX, 0(BX)
	RET
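Note on the finalization tail above: the hash64 and hash128 branches come down to plain wrapping 64-bit additions over the 16-word state. The following is a minimal Go sketch of that arithmetic, assuming the layout implied by the AVX2 loads (words 0-3, 4-7, 8-11 and 12-15 each forming one 256-bit lane group). The names `sum64` and `sum128` are illustrative only and are not part of the vendored package.

```go
package main

import "fmt"

// sum64 mirrors the hash64 branch: it adds the first word of each
// of the four 4-word groups of the state.
func sum64(s *[16]uint64) uint64 {
	return s[0] + s[4] + s[8] + s[12]
}

// sum128 mirrors the hash128 branch: two accumulators built from
// words 0/6/8/14 and 1/7/9/15 of the state.
func sum128(s *[16]uint64) (lo, hi uint64) {
	lo = s[0] + s[6] + s[8] + s[14]
	hi = s[1] + s[7] + s[9] + s[15]
	return lo, hi
}

func main() {
	var s [16]uint64
	for i := range s {
		s[i] = uint64(i) // toy state, only for demonstration
	}
	fmt.Println(sum64(&s))
	fmt.Println(sum128(&s))
}
```

The 256-bit branch is not covered here because it additionally passes each group of four sums through `REDUCE_MOD`.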

+67

@@ -0,0 +1,67 @@

// Copyright (c) 2017 Minio Inc. All rights reserved.
// Use of this source code is governed by a license that can be
// found in the LICENSE file.

// +build amd64,!gccgo,!appengine,!nacl,!noasm

package highwayhash

import "golang.org/x/sys/cpu"

var (
	useSSE4 = cpu.X86.HasSSE41
	useAVX2 = cpu.X86.HasAVX2
	useNEON = false
	useVMX  = false
)

//go:noescape
func initializeSSE4(state *[16]uint64, key []byte)

//go:noescape
func initializeAVX2(state *[16]uint64, key []byte)

//go:noescape
func updateSSE4(state *[16]uint64, msg []byte)

//go:noescape
func updateAVX2(state *[16]uint64, msg []byte)

//go:noescape
func finalizeSSE4(out []byte, state *[16]uint64)

//go:noescape
func finalizeAVX2(out []byte, state *[16]uint64)

func initialize(state *[16]uint64, key []byte) {
	switch {
	case useAVX2:
		initializeAVX2(state, key)
	case useSSE4:
		initializeSSE4(state, key)
	default:
		initializeGeneric(state, key)
	}
}

func update(state *[16]uint64, msg []byte) {
	switch {
	case useAVX2:
		updateAVX2(state, msg)
	case useSSE4:
		updateSSE4(state, msg)
	default:
		updateGeneric(state, msg)
	}
}

func finalize(out []byte, state *[16]uint64) {
	switch {
	case useAVX2:
		finalizeAVX2(out, state)
	case useSSE4:
		finalizeSSE4(out, state)
	default:
		finalizeGeneric(out, state)
	}
}
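Note on the dispatch in the file above: each wrapper uses a tagless `switch`, so the first true case wins and AVX2 takes priority over SSE4.1 when a CPU reports both. The sketch below reproduces only that selection order in a self-contained form. `pickImpl` and its boolean parameters are hypothetical stand-ins for the `useAVX2` / `useSSE4` flags and are not part of the vendored package.

```go
package main

import "fmt"

// pickImpl is a hypothetical helper that returns the name of the code
// path the wrappers would take for a given pair of CPU feature flags.
func pickImpl(hasAVX2, hasSSE41 bool) string {
	switch {
	case hasAVX2: // checked first, so it wins when both flags are set
		return "avx2"
	case hasSSE41:
		return "sse4"
	default: // portable fallback
		return "generic"
	}
}

func main() {
	fmt.Println(pickImpl(true, true))
	fmt.Println(pickImpl(false, true))
	fmt.Println(pickImpl(false, false))
}
```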

+294

@@ -0,0 +1,294 @@

// Copyright (c) 2017 Minio Inc. All rights reserved.
// Use of this source code is governed by a license that can be
// found in the LICENSE file.

// +build amd64,!gccgo,!appengine,!nacl,!noasm

#include "textflag.h"

DATA ·asmConstants<>+0x00(SB)/8, $0xdbe6d5d5fe4cce2f
DATA ·asmConstants<>+0x08(SB)/8, $0xa4093822299f31d0
DATA ·asmConstants<>+0x10(SB)/8, $0x13198a2e03707344
DATA ·asmConstants<>+0x18(SB)/8, $0x243f6a8885a308d3
DATA ·asmConstants<>+0x20(SB)/8, $0x3bd39e10cb0ef593
DATA ·asmConstants<>+0x28(SB)/8, $0xc0acf169b5f18a8c
DATA ·asmConstants<>+0x30(SB)/8, $0xbe5466cf34e90c6c
DATA ·asmConstants<>+0x38(SB)/8, $0x452821e638d01377
GLOBL ·asmConstants<>(SB), (NOPTR+RODATA), $64

DATA ·asmZipperMerge<>+0x00(SB)/8, $0xf010e05020c03
DATA ·asmZipperMerge<>+0x08(SB)/8, $0x70806090d0a040b
GLOBL ·asmZipperMerge<>(SB), (NOPTR+RODATA), $16

#define v00 X0
#define v01 X1
#define v10 X2
#define v11 X3
#define m00 X4
#define m01 X5
#define m10 X6
#define m11 X7

#define t0 X8
#define t1 X9
#define t2 X10

#define REDUCE_MOD(x0, x1, x2, x3, tmp0, tmp1, y0, y1) \
	MOVQ $0x3FFFFFFFFFFFFFFF, tmp0 \
	ANDQ tmp0, x3 \
	MOVQ x2, y0 \
	MOVQ x3, y1 \
	\
	MOVQ x2, tmp0 \
	MOVQ x3, tmp1 \
	SHLQ $1, tmp1 \
	SHRQ $63, tmp0 \
	MOVQ tmp1, x3 \
	ORQ tmp0, x3 \
	\
	SHLQ $1, x2 \
	\
	MOVQ y0, tmp0 \
	MOVQ y1, tmp1 \
	SHLQ $2, tmp1 \
	SHRQ $62, tmp0 \
	MOVQ tmp1, y1 \
	ORQ tmp0, y1 \
	\
	SHLQ $2, y0 \
	\
	XORQ x0, y0 \
	XORQ x2, y0 \
	XORQ x1, y1 \
	XORQ x3, y1

#define UPDATE(msg0, msg1) \
	PADDQ msg0, v10 \
	PADDQ m00, v10 \
	PADDQ msg1, v11 \
	PADDQ m01, v11 \
	\
	MOVO v00, t0 \
	MOVO v01, t1 \
	PSRLQ $32, t0 \
	PSRLQ $32, t1 \
	PMULULQ v10, t0 \
	PMULULQ v11, t1 \
	PXOR t0, m00 \
	PXOR t1, m01 \
	\
	PADDQ m10, v00 \
	PADDQ m11, v01 \
	\
	MOVO v10, t0 \
	MOVO v11, t1 \
	PSRLQ $32, t0 \
	PSRLQ $32, t1 \
	PMULULQ v00, t0 \
	PMULULQ v01, t1 \
	PXOR t0, m10 \
	PXOR t1, m11 \
	\
	MOVO v10, t0 \
	PSHUFB t2, t0 \
	MOVO v11, t1 \
	PSHUFB t2, t1 \
	PADDQ t0, v00 \
	PADDQ t1, v01 \
	\
	MOVO v00, t0 \
	PSHUFB t2, t0 \
	MOVO v01, t1 \
	PSHUFB t2, t1 \
	PADDQ t0, v10 \
	PADDQ t1, v11

// func initializeSSE4(state *[16]uint64, key []byte)
TEXT ·initializeSSE4(SB), NOSPLIT, $0-32
	MOVQ state+0(FP), AX
	MOVQ key_base+8(FP), BX
	MOVQ $·asmConstants<>(SB), CX

	MOVOU 0(BX), v00
	MOVOU 16(BX), v01

	PSHUFD $177, v00, v10
	PSHUFD $177, v01, v11

	MOVOU 0(CX), m00
	MOVOU 16(CX), m01
	MOVOU 32(CX), m10
	MOVOU 48(CX), m11

	PXOR m00, v00
	PXOR m01, v01
	PXOR m10, v10
	PXOR m11, v11

	MOVOU v00, 0(AX)
	MOVOU v01, 16(AX)
	MOVOU v10, 32(AX)
	MOVOU v11, 48(AX)
	MOVOU m00, 64(AX)
	MOVOU m01, 80(AX)
	MOVOU m10, 96(AX)
	MOVOU m11, 112(AX)
	RET

// func updateSSE4(state *[16]uint64, msg []byte)
TEXT ·updateSSE4(SB), NOSPLIT, $0-32
	MOVQ state+0(FP), AX
	MOVQ msg_base+8(FP), BX
	MOVQ msg_len+16(FP), CX

	CMPQ CX, $32
	JB DONE

	MOVOU 0(AX), v00
	MOVOU 16(AX), v01
	MOVOU 32(AX), v10
	MOVOU 48(AX), v11
	MOVOU 64(AX), m00
	MOVOU 80(AX), m01
	MOVOU 96(AX), m10
	MOVOU 112(AX), m11

	MOVOU ·asmZipperMerge<>(SB), t2

LOOP:
	MOVOU 0(BX), t0
	MOVOU 16(BX), t1

	UPDATE(t0, t1)

	ADDQ $32, BX
	SUBQ $32, CX
	JA LOOP

	MOVOU v00, 0(AX)
	MOVOU v01, 16(AX)
	MOVOU v10, 32(AX)
	MOVOU v11, 48(AX)
	MOVOU m00, 64(AX)
	MOVOU m01, 80(AX)
	MOVOU m10, 96(AX)
	MOVOU m11, 112(AX)

DONE:
	RET

// func finalizeSSE4(out []byte, state *[16]uint64)
TEXT ·finalizeSSE4(SB), NOSPLIT, $0-32
	MOVQ state+24(FP), AX
	MOVQ out_base+0(FP), BX
	MOVQ out_len+8(FP), CX

	MOVOU 0(AX), v00
	MOVOU 16(AX), v01
	MOVOU 32(AX), v10
	MOVOU 48(AX), v11
	MOVOU 64(AX), m00
	MOVOU 80(AX), m01
	MOVOU 96(AX), m10
	MOVOU 112(AX), m11

	MOVOU ·asmZipperMerge<>(SB), t2

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	CMPQ CX, $8
	JE skipUpdate // Just 4 rounds for 64-bit checksum

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	CMPQ CX, $16
	JE skipUpdate // 6 rounds for 128-bit checksum

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

	PSHUFD $177, v01, t0
	PSHUFD $177, v00, t1
	UPDATE(t0, t1)

skipUpdate:
	MOVOU v00, 0(AX)
	MOVOU v01, 16(AX)
	MOVOU v10, 32(AX)
	MOVOU v11, 48(AX)
	MOVOU m00, 64(AX)
	MOVOU m01, 80(AX)
	MOVOU m10, 96(AX)
	MOVOU m11, 112(AX)

	CMPQ CX, $8
	JE hash64
	CMPQ CX, $16
	JE hash128

	// 256-bit checksum
	PADDQ v00, m00
	PADDQ v10, m10
	PADDQ v01, m01
	PADDQ v11, m11

	MOVQ m00, R8
	PEXTRQ $1, m00, R9
	MOVQ m10, R10
	PEXTRQ $1, m10, R11
	REDUCE_MOD(R8, R9, R10, R11, R12, R13, R14, R15)
	MOVQ R14, 0(BX)
	MOVQ R15, 8(BX)

	MOVQ m01, R8
	PEXTRQ $1, m01, R9
	MOVQ m11, R10
	PEXTRQ $1, m11, R11
	REDUCE_MOD(R8, R9, R10, R11, R12, R13, R14, R15)
	MOVQ R14, 16(BX)
	MOVQ R15, 24(BX)
	RET

hash128:
	PADDQ v00, v11
	PADDQ m00, m11
	PADDQ v11, m11
	MOVOU m11, 0(BX)
	RET

hash64:
	PADDQ v00, v10
	PADDQ m00, m10
	PADDQ v10, m10
	MOVQ m10, DX
	MOVQ DX, 0(BX)
	RET
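Note on `REDUCE_MOD` in the file above: read instruction by instruction, the macro clears the top two bits of the highest word, then XORs the low 128 bits with the high 128 bits shifted left by one and by two bit positions. The Go function below is a sketch of that same arithmetic, derived from the macro body. The name `reduceMod` is chosen here for illustration and is not taken from the assembly.

```go
package main

import "fmt"

// reduceMod folds the 256-bit value x3:x2:x1:x0 into 128 bits (y1:y0),
// following the REDUCE_MOD macro step by step.
func reduceMod(x0, x1, x2, x3 uint64) (y0, y1 uint64) {
	x3 &= 0x3FFFFFFFFFFFFFFF // clear the top two bits, as the macro does

	// Low word: x0 XOR (high half << 1) XOR (high half << 2), low 64 bits.
	y0 = x0 ^ (x2 << 1) ^ (x2 << 2)

	// High word: the bits shifted out of x2 carry into the x3 shifts.
	y1 = x1 ^ (x3<<1 | x2>>63) ^ (x3<<2 | x2>>62)
	return y0, y1
}

func main() {
	// The top bit of x2 carries into the high output word.
	y0, y1 := reduceMod(0, 0, 1<<63, 0)
	fmt.Println(y0, y1)
}
```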

+47

@@ -0,0 +1,47 @@

// Copyright (c) 2017 Minio Inc. All rights reserved.
// Use of this source code is governed by a license that can be
// found in the LICENSE file.

//+build !noasm,!appengine

package highwayhash

var (
	useSSE4 = false
	useAVX2 = false
	useNEON = true
	useVMX  = false
)

//go:noescape
func initializeArm64(state *[16]uint64, key []byte)

//go:noescape
func updateArm64(state *[16]uint64, msg []byte)

//go:noescape
func finalizeArm64(out []byte, state *[16]uint64)

func initialize(state *[16]uint64, key []byte) {
	if useNEON {
		initializeArm64(state, key)
	} else {
		initializeGeneric(state, key)
	}
}

func update(state *[16]uint64, msg []byte) {
	if useNEON {
		updateArm64(state, msg)
	} else {
		updateGeneric(state, msg)
	}
}

func finalize(out []byte, state *[16]uint64) {
	if useNEON {
		finalizeArm64(out, state)
	} else {
		finalizeGeneric(out, state)
	}
}

+324

@@ -0,0 +1,324 @@

//
// Minio Cloud Storage, (C) 2017 Minio, Inc.
//
// Licensed under the Apache License, Version 2.0 (the "License");
// you may not use this file except in compliance with the License.
// You may obtain a copy of the License at
//
// http://www.apache.org/licenses/LICENSE-2.0
//
// Unless required by applicable law or agreed to in writing, software
// distributed under the License is distributed on an "AS IS" BASIS,
// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
// See the License for the specific language governing permissions and
// limitations under the License.
//

//+build !noasm,!appengine

// Use github.com/minio/asm2plan9s on this file to assemble ARM instructions to
// the opcodes of their Plan9 equivalents

#include "textflag.h"

#define REDUCE_MOD(x0, x1, x2, x3, tmp0, tmp1, y0, y1) \
	MOVD $0x3FFFFFFFFFFFFFFF, tmp0 \
	AND tmp0, x3 \
	MOVD x2, y0 \
	MOVD x3, y1 \
	\
	MOVD x2, tmp0 \
	MOVD x3, tmp1 \
	LSL $1, tmp1 \
	LSR $63, tmp0 \
	MOVD tmp1, x3 \
	ORR tmp0, x3 \
	\
	LSL $1, x2 \
	\
	MOVD y0, tmp0 \
	MOVD y1, tmp1 \
	LSL $2, tmp1 \
	LSR $62, tmp0 \
	MOVD tmp1, y1 \
	ORR tmp0, y1 \
	\
	LSL $2, y0 \
	\
	EOR x0, y0 \
	EOR x2, y0 \
	EOR x1, y1 \
	EOR x3, y1

#define UPDATE(MSG1, MSG2) \
	\ // Add message
	VADD MSG1.D2, V2.D2, V2.D2 \
	VADD MSG2.D2, V3.D2, V3.D2 \
	\
	\ // v1 += mul0
	VADD V4.D2, V2.D2, V2.D2 \
	VADD V5.D2, V3.D2, V3.D2 \
	\
	\ // First pair of multiplies
	VTBL V29.B16, [V0.B16, V1.B16], V10.B16 \
	VTBL V30.B16, [V2.B16, V3.B16], V11.B16 \
	\
	\ // VUMULL V10.S2, V11.S2, V12.D2 /* assembler support missing */
	\ // VUMULL2 V10.S4, V11.S4, V13.D2 /* assembler support missing */
	WORD $0x2eaac16c \ // umull v12.2d, v11.2s, v10.2s
	WORD $0x6eaac16d \ // umull2 v13.2d, v11.4s, v10.4s
	\
	\ // v0 += mul1
	VADD V6.D2, V0.D2, V0.D2 \
	VADD V7.D2, V1.D2, V1.D2 \
	\
	\ // Second pair of multiplies
	VTBL V29.B16, [V2.B16, V3.B16], V15.B16 \
	VTBL V30.B16, [V0.B16, V1.B16], V14.B16 \
	\
	\ // EOR multiplication result in
	VEOR V12.B16, V4.B16, V4.B16 \
	VEOR V13.B16, V5.B16, V5.B16 \
	\
	\ // VUMULL V14.S2, V15.S2, V16.D2 /* assembler support missing */
	\ // VUMULL2 V14.S4, V15.S4, V17.D2 /* assembler support missing */
	WORD $0x2eaec1f0 \ // umull v16.2d, v15.2s, v14.2s
	WORD $0x6eaec1f1 \ // umull2 v17.2d, v15.4s, v14.4s
	\
	\ // First pair of zipper-merges
	VTBL V28.B16, [V2.B16], V18.B16 \
	VADD V18.D2, V0.D2, V0.D2 \
	VTBL V28.B16, [V3.B16], V19.B16 \
	VADD V19.D2, V1.D2, V1.D2 \
	\
	\ // Second pair of zipper-merges
	VTBL V28.B16, [V0.B16], V20.B16 \
	VADD V20.D2, V2.D2, V2.D2 \
	VTBL V28.B16, [V1.B16], V21.B16 \
	VADD V21.D2, V3.D2, V3.D2 \
	\
	\ // EOR multiplication result in
	VEOR V16.B16, V6.B16, V6.B16 \
	VEOR V17.B16, V7.B16, V7.B16

// func initializeArm64(state *[16]uint64, key []byte)
TEXT ·initializeArm64(SB), NOSPLIT, $0
	MOVD state+0(FP), R0
	MOVD key_base+8(FP), R1

	VLD1 (R1), [V1.S4, V2.S4]

	VREV64 V1.S4, V3.S4
	VREV64 V2.S4, V4.S4

	MOVD $·asmConstants(SB), R3
	VLD1 (R3), [V5.S4, V6.S4, V7.S4, V8.S4]
	VEOR V5.B16, V1.B16, V1.B16
	VEOR V6.B16, V2.B16, V2.B16
	VEOR V7.B16, V3.B16, V3.B16
	VEOR V8.B16, V4.B16, V4.B16

	VST1.P [V1.D2, V2.D2, V3.D2, V4.D2], 64(R0)
	VST1 [V5.D2, V6.D2, V7.D2, V8.D2], (R0)
	RET

TEXT ·updateArm64(SB), NOSPLIT, $0
	MOVD state+0(FP), R0
	MOVD msg_base+8(FP), R1
	MOVD msg_len+16(FP), R2 // length of message
	SUBS $32, R2
	BMI complete

	// Definition of registers
	// v0 = v0.lo
	// v1 = v0.hi
	// v2 = v1.lo
	// v3 = v1.hi
	// v4 = mul0.lo
	// v5 = mul0.hi
	// v6 = mul1.lo
	// v7 = mul1.hi

	// Load zipper merge constants table pointer
	MOVD $·asmZipperMerge(SB), R3

	// and load zipper merge constants into v28, v29, and v30
	VLD1 (R3), [V28.B16, V29.B16, V30.B16]

	VLD1.P 64(R0), [V0.D2, V1.D2, V2.D2, V3.D2]
	VLD1 (R0), [V4.D2, V5.D2, V6.D2, V7.D2]
	SUBS $64, R0

loop:
	// Main loop
	VLD1.P 32(R1), [V26.S4, V27.S4]

	UPDATE(V26, V27)

	SUBS $32, R2
	BPL loop

	// Store result
	VST1.P [V0.D2, V1.D2, V2.D2, V3.D2], 64(R0)
	VST1 [V4.D2, V5.D2, V6.D2, V7.D2], (R0)

complete:
	RET

// func finalizeArm64(out []byte, state *[16]uint64)
TEXT ·finalizeArm64(SB), NOSPLIT, $0-32
	MOVD state+24(FP), R0
	MOVD out_base+0(FP), R1
	MOVD out_len+8(FP), R2

	// Load zipper merge constants table pointer
	MOVD $·asmZipperMerge(SB), R3

	// and load zipper merge constants into v28, v29, and v30
	VLD1 (R3), [V28.B16, V29.B16, V30.B16]

	VLD1.P 64(R0), [V0.D2, V1.D2, V2.D2, V3.D2]
	VLD1 (R0), [V4.D2, V5.D2, V6.D2, V7.D2]
	SUB $64, R0

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	CMP $8, R2
	BEQ skipUpdate // Just 4 rounds for 64-bit checksum

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	CMP $16, R2
	BEQ skipUpdate // 6 rounds for 128-bit checksum

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

	VREV64 V1.S4, V26.S4
	VREV64 V0.S4, V27.S4
	UPDATE(V26, V27)

skipUpdate:
	// Store result
	VST1.P [V0.D2, V1.D2, V2.D2, V3.D2], 64(R0)
	VST1 [V4.D2, V5.D2, V6.D2, V7.D2], (R0)
	SUB $64, R0

	CMP $8, R2
	BEQ hash64
	CMP $16, R2
	BEQ hash128

	// 256-bit checksum
	MOVD 0*8(R0), R8
	MOVD 1*8(R0), R9
	MOVD 4*8(R0), R10
	MOVD 5*8(R0), R11
	MOVD 8*8(R0), R4
	MOVD 9*8(R0), R5
	MOVD 12*8(R0), R6
	MOVD 13*8(R0), R7
	ADD R4, R8
	ADD R5, R9
	ADD R6, R10
	ADD R7, R11

	REDUCE_MOD(R8, R9, R10, R11, R4, R5, R6, R7)
	MOVD R6, 0(R1)
	MOVD R7, 8(R1)

	MOVD 2*8(R0), R8
	MOVD 3*8(R0), R9
	MOVD 6*8(R0), R10
	MOVD 7*8(R0), R11
	MOVD 10*8(R0), R4
	MOVD 11*8(R0), R5
	MOVD 14*8(R0), R6
	MOVD 15*8(R0), R7
	ADD R4, R8
	ADD R5, R9
	ADD R6, R10
	ADD R7, R11

	REDUCE_MOD(R8, R9, R10, R11, R4, R5, R6, R7)
	MOVD R6, 16(R1)
	MOVD R7, 24(R1)
	RET

hash128:
	MOVD 0*8(R0), R8
	MOVD 1*8(R0), R9
	MOVD 6*8(R0), R10
	MOVD 7*8(R0), R11
	ADD R10, R8
	ADD R11, R9
	MOVD 8*8(R0), R10
	MOVD 9*8(R0), R11
	ADD R10, R8
	ADD R11, R9
	MOVD 14*8(R0), R10
	MOVD 15*8(R0), R11
	ADD R10, R8
	ADD R11, R9
	MOVD R8, 0(R1)
	MOVD R9, 8(R1)
	RET

hash64:
	MOVD 0*8(R0), R4
	MOVD 4*8(R0), R5
	MOVD 8*8(R0), R6
	MOVD 12*8(R0), R7
	ADD R5, R4
	ADD R7, R6
	ADD R6, R4
	MOVD R4, (R1)
	RET

DATA ·asmConstants+0x00(SB)/8, $0xdbe6d5d5fe4cce2f
DATA ·asmConstants+0x08(SB)/8, $0xa4093822299f31d0
DATA ·asmConstants+0x10(SB)/8, $0x13198a2e03707344
DATA ·asmConstants+0x18(SB)/8, $0x243f6a8885a308d3
DATA ·asmConstants+0x20(SB)/8, $0x3bd39e10cb0ef593
DATA ·asmConstants+0x28(SB)/8, $0xc0acf169b5f18a8c
DATA ·asmConstants+0x30(SB)/8, $0xbe5466cf34e90c6c
DATA ·asmConstants+0x38(SB)/8, $0x452821e638d01377
GLOBL ·asmConstants(SB), 8, $64

// Constants for TBL instructions
DATA ·asmZipperMerge+0x0(SB)/8, $0x000f010e05020c03 // zipper merge constant
DATA ·asmZipperMerge+0x8(SB)/8, $0x070806090d0a040b
DATA ·asmZipperMerge+0x10(SB)/8, $0x0f0e0d0c07060504 // setup first register for multiply
DATA ·asmZipperMerge+0x18(SB)/8, $0x1f1e1d1c17161514
DATA ·asmZipperMerge+0x20(SB)/8, $0x0b0a090803020100 // setup second register for multiply
DATA ·asmZipperMerge+0x28(SB)/8, $0x1b1a191813121110
GLOBL ·asmZipperMerge(SB), 8, $48
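Note on the zipper-merge constant above: the first 16 bytes of `asmZipperMerge` act as a byte-index table, so that output byte i is taken from input byte `table[i]`. Every index here is below 16, which means the single-register `VTBL` lookups behave as a plain byte permutation. The Go sketch below spells out that permutation. The index bytes are the two 64-bit constants written in little-endian order, and the names `zipperIdx` and `zipperMerge` are illustrative only.

```go
package main

import "fmt"

// zipperIdx holds the little-endian bytes of 0x000f010e05020c03 followed
// by those of 0x070806090d0a040b.
var zipperIdx = [16]byte{
	0x03, 0x0c, 0x02, 0x05, 0x0e, 0x01, 0x0f, 0x00,
	0x0b, 0x04, 0x0a, 0x0d, 0x09, 0x06, 0x08, 0x07,
}

// zipperMerge permutes the 16 input bytes: dst[i] = src[zipperIdx[i]].
func zipperMerge(src [16]byte) (dst [16]byte) {
	for i, idx := range zipperIdx {
		dst[i] = src[idx]
	}
	return dst
}

func main() {
	var src [16]byte
	for i := range src {
		src[i] = byte(i) // identity input makes the permutation visible
	}
	fmt.Printf("% x\n", zipperMerge(src))
}
```

With the identity input used in `main`, the output bytes equal the index table itself, which is a quick way to check a transcription of the constant.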

+161

@@ -0,0 +1,161 @@

// Copyright (c) 2017 Minio Inc. All rights reserved.
// Use of this source code is governed by a license that can be
// found in the LICENSE file.

package highwayhash

import (