Mirror of https://github.com/laurent22/joplin.git (synced 2026-05-04 13:30:08 -05:00)

Chore: Server: Debug: Add debug benchmarkDeltaPerformance API (#13801)


@@ -75,6 +75,11 @@ export default class UserItemModel extends BaseModel<UserItem> {

 			.where('user_items.user_id', '=', userId);
 	}

+	public async countWithUserId(userId: Uuid): Promise<number> {
+		const count = await this.db(this.tableName).count('*').where('user_id', '=', userId);
+		return count[0].count;
+	}
+
 	public async byUserId(userId: Uuid): Promise<UserItem[]> {
 		return this.db(this.tableName).where('user_id', '=', userId);
 	}
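A note on the return type of `countWithUserId` above: what `count[0].count` holds depends on the database driver. Knex's documentation notes that under PostgreSQL `count` produces a bigint that arrives as a string, while other drivers may return a number. The sketch below shows one way such a value could be normalised. `CountRow` and `toCount` are hypothetical names used for illustration and are not part of this commit.

```typescript
// Hypothetical helper, not part of the commit: coerce a driver-dependent
// count value (string or number) into a plain number.
type CountRow = { count: string | number };

const toCount = (rows: CountRow[]): number => {
	// An empty result set is treated as a count of zero.
	const raw = rows.length ? rows[0].count : 0;
	const value = typeof raw === 'number' ? raw : Number(raw);
	if (!Number.isFinite(value)) {
		throw new Error(`Unexpected count value: ${raw}`);
	}
	return value;
};

const fromPostgres = toCount([{ count: '42' }]); // string input
const fromSqlite = toCount([{ count: 7 }]); // numeric input
```

Whether this matters in practice depends on which database the server is configured to use.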

@@ -8,6 +8,7 @@ import { SubPath } from '../../utils/routeUtils';

 import { AppContext } from '../../utils/types';
 import { ErrorForbidden } from '../../utils/errors';
 import createTestUsers, { CreateTestUsersOptions } from '../../tools/debug/createTestUsers';
+import benchmarkDeltaPerformance from '../../tools/benchmark/benchmarkDeltaPerformance';
 import createUserDeletions from '../../tools/debug/createUserDeletions';
 import clearDatabase from '../../tools/debug/clearDatabase';
 import populateDatabase from '../../tools/debug/populateDatabase';

@@ -52,6 +53,10 @@ router.post('api/debug', async (_path: SubPath, ctx: AppContext) => {

 		await models.keyValue().deleteAll();
 	}

+	if (query.action === 'benchmarkDeltaPerformance') {
+		await benchmarkDeltaPerformance(ctx.joplin.models);
+	}
+
 	if (query.action === 'populateDatabase') {
 		const size = 'size' in query ? Number(query.size) : 1;
 		const actionCount = (() => {

@@ -0,0 +1,52 @@

+import { Models } from '../../models/factory';
+import { ShareType, Uuid } from '../../services/database/types';
+import recordBenchmark from './recordBenchmark';
+
+const benchmarkDeltaPerformance = async (models: Models) => {
+	const iterateUsers = async function*() {
+		let page = 1;
+		let hasMore = true;
+		while (hasMore) {
+			const batchSize = 50;
+			const items = await models.user().allPaginated({ page: page++, limit: batchSize });
+			hasMore = items.has_more;
+
+			const users = items.items;
+			yield await Promise.all(users.map(async user => {
+				const userItemCount = await models.userItem().countWithUserId(user.id);
+				const shareCount = (await models.share().byUserId(user.id, ShareType.Folder)).length;
+
+				return {
+					labels: {
+						'User ID': user.id,
+						'Share count': shareCount,
+						'user_items count': userItemCount,
+						'Total item size': user.total_item_size,
+					},
+					data: user.id,
+				};
+			}));
+		}
+	};
+
+	await recordBenchmark<Uuid>({
+		taskLabel: 'full delta',
+		batchIterator: iterateUsers(),
+		trialCount: 10,
+		outputFile: 'delta-perf-full.csv',
+		runTask: async (userId) => {
+			await models.change().delta(userId, { cursor: '', limit: 200 });
+		},
+	});
+	await recordBenchmark<Uuid>({
+		taskLabel: 'changes query',
+		batchIterator: iterateUsers(),
+		trialCount: 10,
+		outputFile: 'delta-perf-query-only.csv',
+		runTask: async (userId) => {
+			await models.change().changesForUserQuery(userId, -1, 200, false);
+		},
+	});
+};
+
+export default benchmarkDeltaPerformance;
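Each `recordBenchmark` call above receives its own `iterateUsers()` rather than a shared iterator. A generator object can only be consumed once, so a reused one would leave the second benchmark with no batches to process. The sketch below illustrates this with a synchronous generator for brevity; async generators behave the same way in this respect. `pages` and `collect` are hypothetical names and are not part of the commit.

```typescript
// Hypothetical illustration, not part of the commit.
// Yields `items` in batches of `batchSize`, similar in spirit to iterateUsers().
function* pages<T>(items: T[], batchSize: number): Generator<T[]> {
	for (let i = 0; i < items.length; i += batchSize) {
		yield items.slice(i, i + batchSize);
	}
}

// Drains an iterable into an array.
function collect<T>(source: Iterable<T>): T[] {
	return Array.from(source);
}

const shared = pages([1, 2, 3, 4, 5], 2);
const firstPass = collect(shared); // three batches
const secondPass = collect(shared); // empty: the generator is already exhausted
const freshPass = collect(pages([1, 2, 3, 4, 5], 2)); // three batches again
```

Calling the generator function again, as the commit does, is the simplest way to get an independent pass over the same data.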

@@ -0,0 +1,104 @@

+import shim from '@joplin/lib/shim';
+import { getRootDir } from '@joplin/utils';
+import Logger from '@joplin/utils/Logger';
+import { writeFile } from 'fs/promises';
+
+const logger = Logger.create('benchmark');
+
+const computeAverage = (data: number[]) => {
+	const total = data.reduce((a, b) => a + b, 0);
+	return total / (data.length || 1);
+};
+
+const computeStandardDeviation = (data: number[]) => {
+	const average = computeAverage(data);
+	// Variance(X) = average square distance from the mean
+	//             = average((x - average(X))^2 : for all (x in X))
+	const variance = computeAverage(data.map((x) => Math.pow(x - average, 2)));
+	const standardDeviation = Math.sqrt(variance);
+	return standardDeviation;
+};
+
+const computeStatistics = (data: number[]) => {
+	return { average: computeAverage(data), standardDeviation: computeStandardDeviation(data) };
+};
+
+type TrialLabel = Record<string, string|number>;
+interface LabelledInputs<DataPoint> {
+	labels: TrialLabel;
+	data: DataPoint;
+}
+
+interface BenchmarkOptions<InputData> {
+	// Brief label of the task (to be used for column headers
+	// in the output file)
+	taskLabel: string;
+	// Should run the task once
+	runTask: (dataPoint: InputData)=> Promise<void>;
+
+	// Returns data in groups
+	batchIterator: AsyncIterable<LabelledInputs<InputData>[]>;
+	// Number of times to re-test the performance of each group of data
+	trialCount: number;
+
+	outputFile: string;
+}
+
+const recordBenchmark = async <DataPoint> ({ batchIterator: inputData, trialCount: trials, outputFile, taskLabel, runTask: runTrial }: BenchmarkOptions<DataPoint>) => {
+	logger.info('Creating benchmark for', outputFile);
+	let page = 0;
+
+	const results = [];
+	const dataPointToDurations = new Map<TrialLabel, number[]>();
+	for await (const batch of inputData) {
+		logger.info('Page', page, '. Preparing to process', batch.length, 'items...');
+		page++;
+
+		for (let trial = 0; trial < trials; trial ++) {
+			logger.info('Trial', trial);
+			for (const item of batch) {
+				const startTime = performance.now();
+				await runTrial(item.data);
+				const endTime = performance.now();
+				const duration = endTime - startTime;
+
+				const values = dataPointToDurations.get(item.labels);
+				if (values) {
+					values.push(duration);
+				} else {
+					dataPointToDurations.set(item.labels, [duration]);
+				}
+			}
+		}
+
+		for (const [labels, durations] of dataPointToDurations) {
+			const { average, standardDeviation } = computeStatistics(durations);
+
+			results.push({
+				...labels,
+				[`Avg. ${taskLabel} duration (ms)`]: average,
+				[`standardDeviation(${taskLabel} duration) (ms)`]: standardDeviation,
+			});
+		}
+		dataPointToDurations.clear();
+	}
+
+	if (results.length === 0) {
+		throw new Error('No data collected.');
+	}
+
+
+	const resultCsv = [
+		Object.keys(results[0]).join(','),
+		...results.map(result => Object.values(result).join(',')),
+	].join('\n');
+
+	const outputDir = `${await getRootDir()}/packages/server/benchmarks`;
+	await shim.fsDriver().mkdir(outputDir);
+	const outputPath = await shim.fsDriver().resolveRelativePathWithinDir(outputDir, outputFile);
+
+	await writeFile(outputPath, resultCsv);
+	logger.info('Done. Wrote output to', outputPath);
+};
+
+export default recordBenchmark;
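`computeStandardDeviation` above divides the summed squared deviations by the number of samples, which gives the population standard deviation rather than the sample (N − 1) form. As a small worked example, take the durations 2, 4, 4, 4, 5, 5, 7, 9: the mean is 5, the squared deviations sum to 32, the variance is 32 / 8 = 4, and the standard deviation is 2. The sketch below re-implements the same calculation so that it runs on its own; `mean` and `summarise` are hypothetical names and are not part of the commit.

```typescript
// Hypothetical standalone re-implementation of the statistics in this commit.
const mean = (data: number[]): number => {
	const total = data.reduce((a, b) => a + b, 0);
	// Dividing by (length || 1) makes an empty input yield 0 rather than NaN.
	return total / (data.length || 1);
};

const summarise = (data: number[]) => {
	const average = mean(data);
	// Population variance: mean of the squared distances from the average.
	const variance = mean(data.map(x => (x - average) * (x - average)));
	return { average, standardDeviation: Math.sqrt(variance) };
};

const example = summarise([2, 4, 4, 4, 5, 5, 7, 9]);
// example.average is 5 and example.standardDeviation is 2
```

With `trialCount: 10`, the sample form would be larger by a factor of √(10/9) ≈ 1.05, so the choice mostly matters when comparing these figures against tools that default to the sample form.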

@@ -1,4 +1,6 @@

|

||||

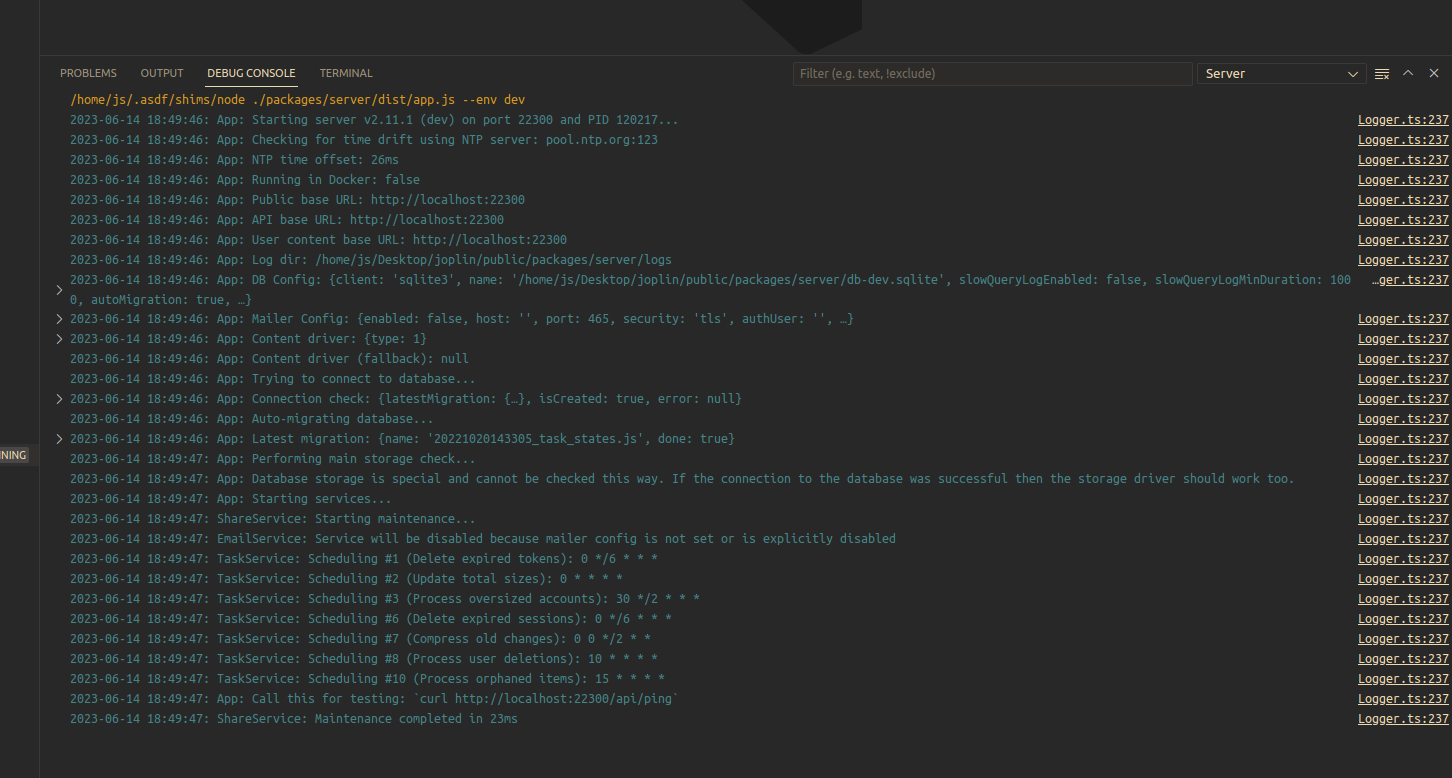

# Debugging Server project with vscode

|

||||

# Debugging Server project

|

||||

|

||||

## Debugging with vscode

|

||||

|

||||

Using a debugger sometimes is much easier than trying to just print things to understand a bug,

|

||||

for the server project we have a configuration that makes it easy for everyone to run in debug mode inside vscode.

|

||||

@@ -42,3 +44,12 @@ the configuration into two files, one for the `launch.json` and other for the `t

|

||||

|

||||

|

||||

|

||||

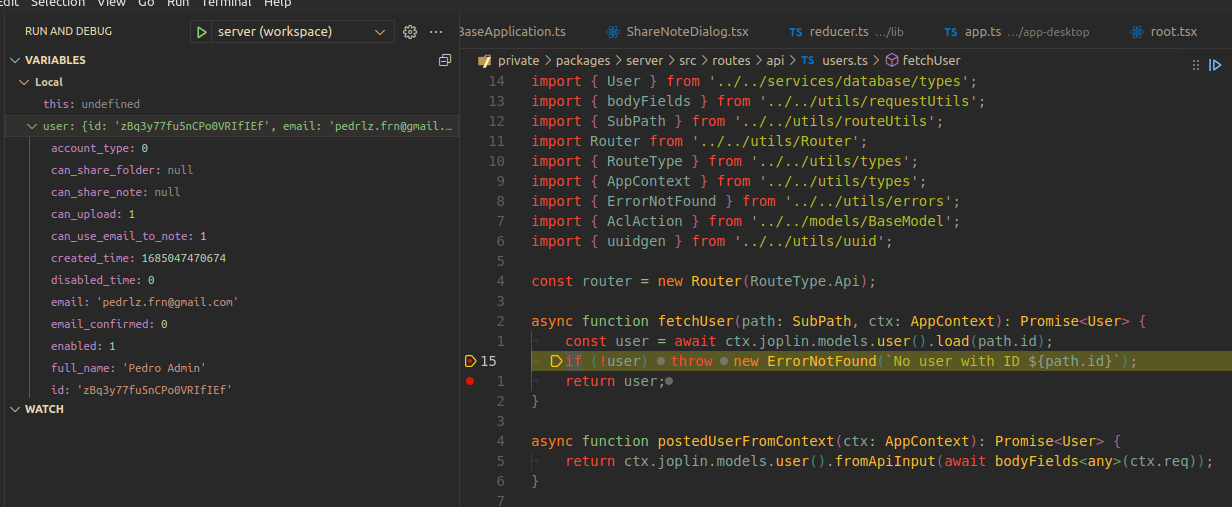

## Running debug commands

|

||||

|

||||

When running in development mode, several debug commands can be run by sending requests to `/api/debug`. These include:

|

||||

- The `populateDatabase` command adds content to the database, creating test users with initial data.

|

||||

- Among other things, this allows testing how Joplin Server handles a large number of users, items, and changes. A larger `size` parameter creates more items. `size` can be either 1, 2, or 3.

|

||||

- Example: `curl --data '{"action": "populateDatabase", "size": 2}' -H 'Content-Type: application/json' http://localhost:22300/api/debug`.

|

||||

- The `benchmarkDeltaPerformance` command tests the performance of `models.change().delta` by calling `delta` multiple times for each user account. Data is saved in `packages/server/delta-perf.csv`.

|

||||

- `ChangeModel.delta` is called during sync and has historically been a performance bottleneck.

|

||||

- Example: `curl --data '{"action":"benchmarkDeltaPerformance"}' -H 'Content-Type: application/json' http://localhost:22300/api/debug`.
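The `curl` examples above send a JSON body in a POST request to `/api/debug`. The same request can be assembled from TypeScript, as in the sketch below. `buildDebugRequest` and `DebugRequest` are hypothetical names for illustration, and the base URL is taken from the `curl` examples. Note that `recordBenchmark` as shown in this commit writes into `packages/server/benchmarks/`, using the file names `delta-perf-full.csv` and `delta-perf-query-only.csv`, rather than the single `packages/server/delta-perf.csv` mentioned in the documentation text.

```typescript
// Hypothetical helper, not part of the commit: builds the URL and request
// options for a debug command, mirroring the curl examples.
interface DebugRequest {
	url: string;
	init: { method: string; headers: Record<string, string>; body: string };
}

const buildDebugRequest = (
	action: string,
	params: Record<string, string | number> = {},
	baseUrl = 'http://localhost:22300',
): DebugRequest => ({
	url: `${baseUrl}/api/debug`,
	init: {
		method: 'POST',
		headers: { 'Content-Type': 'application/json' },
		body: JSON.stringify({ action, ...params }),
	},
});

const populate = buildDebugRequest('populateDatabase', { size: 2 });
const benchmark = buildDebugRequest('benchmarkDeltaPerformance');

// To actually send a request (needs a development server running and a
// runtime with a global fetch, such as Node 18 or later):
//   await fetch(benchmark.url, benchmark.init);
```

Keeping the request construction separate from the network call makes it possible to check the payload without a running server.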
